Jacek Duda, Cadence

After 20 years of continuous improvements in performance and in performance alone - which became dull and, at some point, slowed the transition significantly - USB has become exciting again. Last year we saw the introduction of three specifications, two of which were major updates (USB 3.1, USB Power Delivery 2.0) and one that marked the start of an industry-wide revolution (USB Type-C Cable and Connector 1.0, now 1.1). In this article, let's take a closer look at the application architecture impact of the USB 3.1 specification - namely, the changes that need to be introduced to take advantage of the improved bandwidth that you can get from integrating USB 3.1 Gen 2 support in your designs.

With USB 3.1 Gen 2, you can take advantage of a maximum data rate that has increased to 10Gbps, compared to 5Gbps with USB 3.1 Gen 1 over the same signal pair. Under the hood, however, what this means is that devices supporting two different speeds will use exactly the same lanes, which calls for some architectural tweaks to make sure the bus is saturated, especially when both Gen 1 and Gen 2 devices are transferring data at the same time.

Addressing PHY Changes

From a design standpoint, the higher bandwidth comes with a need to address some key changes in various layers of a USB application. One of the biggest changes is the introduction of a new encoding scheme. USB 3.1 Gen 1 featured 8b/10b encoding - for every 8 bits of data, 10 bits were sent on the wire, so 2 of every 10 transmitted bits (20%) were overhead, which is pretty high from the start. With USB 3.1 Gen 2, however, the new 128b/132b encoding scheme adds only a 4-bit header to every 128 bits of data (about 3% overhead), which brings the usable bandwidth much closer to the theoretical maximum of 10Gbps. The 4-bit headers can be of two types - control (1100) or data block (0011). Such a distinction has two major advantages - first, because the two values differ in all four bits, a single-bit error in the header is self-correctable by design; second, it preserves the link layer architecture with minimal change.
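To make the overhead comparison concrete, here is a minimal Python sketch of the arithmetic behind the two encodings, plus one way a receiver could recover a block header with a single flipped bit. The function names are hypothetical, the figures ignore all other protocol overhead, and the nearest-match decoding is an illustration of the idea rather than the logic mandated by the specification.

```python
# Illustrative only: names are hypothetical and not taken from the USB specification.

def payload_rate_gbps(line_rate_gbps, payload_bits, total_bits):
    """Scale the line rate by the fraction of transmitted bits that carry payload."""
    return line_rate_gbps * payload_bits / total_bits

gen1_rate = payload_rate_gbps(5.0, 8, 10)       # 8b/10b encoding
gen2_rate = payload_rate_gbps(10.0, 128, 132)   # 128b/132b encoding

CONTROL_HEADER = 0b1100
DATA_HEADER = 0b0011

def classify_header(header):
    """Classify a received 4-bit header, tolerating at most one flipped bit."""
    if bin(header ^ CONTROL_HEADER).count("1") <= 1:
        return "control"
    if bin(header ^ DATA_HEADER).count("1") <= 1:
        return "data"
    return None  # two or more bit errors: equally far from both valid headers

print(f"Gen 1 payload rate: {gen1_rate:.2f} Gbps")
print(f"Gen 2 payload rate: {gen2_rate:.2f} Gbps")
print(classify_header(0b1101))  # one bit away from the control header
```

Because the two valid headers differ in all four bits, any single-bit error stays one bit away from the intended header and three bits away from the other, which is what makes the correction unambiguous.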

The fact that both USB 3.1 Gen 1 and Gen 2 use the same lanes forced the USB Implementers Forum (USB-IF) to develop a more effective method of discovering the maximum speed at which the link between two devices can operate. The existing low-frequency periodic signaling (LFPS) was extended into the LFPS-based Pulse Width Modulation Messaging (LBPM) so that devices can talk to each other and negotiate speed even before the link is trained. Therefore, from the very first moment, devices can operate at their maximum speeds, which will be important for future generations of USB, where transfer rates can go to 20Gbps or 40Gbps.

The higher signaling frequency at 10Gbps increases channel loss and, therefore, the need to use re-timers. As the target loss for the system is 23dB (8.5dB each for the host and the device, plus 6dB for the cable assembly), if the device or host loss exceeds 7dB at 5GHz, implementation of a repeater may be necessary.
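The loss budget above is simple addition, and a quick sketch can help when checking a board against it. The constants below are the figures quoted in this article; the function names are hypothetical, and a real design decision would rest on measured channel data rather than a single number.

```python
# Loss figures quoted in the article, in dB at 5GHz. Names are hypothetical.
HOST_BUDGET_DB = 8.5
DEVICE_BUDGET_DB = 8.5
CABLE_BUDGET_DB = 6.0
REPEATER_THRESHOLD_DB = 7.0

def total_budget_db():
    """Sum the host, device, and cable assembly allocations."""
    return HOST_BUDGET_DB + DEVICE_BUDGET_DB + CABLE_BUDGET_DB

def may_need_repeater(measured_loss_db):
    """True when a host or device channel exceeds the 7dB threshold."""
    return measured_loss_db > REPEATER_THRESHOLD_DB

print(total_budget_db())
print(may_need_repeater(7.5))
```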

Link Layer Updates

The USB 3.1 Gen 2 link layer introduces a second traffic class for asynchronous data packets so that isochronous transmission can be prioritized and have a higher chance of meeting the bandwidth requirement. The 128b/132b encoding and its ability to self-recover from single-bit errors allow for the following enhancements for data packets:

- Non-deferred data packet headers can now have their own framing ordered set

- Data packet length field is tolerant of single-bit errors

- Outgoing data packet payload will not be truncated

Multiple INs and Transaction Packet Labeling in Protocol Layer

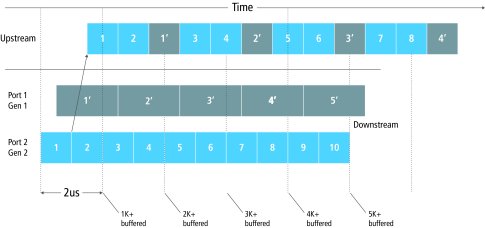

Current generations of USB 3.1 as well as a few future releases of the USB specification will operate on the same lanes. Therefore, there must be a way to optimize the performance so that no bandwidth is lost when the application is getting data from both faster and slower devices. This is where multiple INs come into play. Multiple INs are essentially the ability to ask multiple devices for data, one after another, without waiting to receive the data from each request first. This ability, if supported by both devices and hosts, significantly increases performance by eliminating the Not Ready responses. Even if supported by a USB 3.1 host only, it enables saturation of the bandwidth and, therefore, secures true 10Gbps performance. In the example shown in Figure 1, the hub or host needs to buffer a USB 3.1 Gen 2 packet every third cycle, but the advantage is continuous saturation of the USB 3.1 Gen 2 bus.

Figure 1: Supporting USB 3.1 Gen 1 and Gen 2 devices with multiple INs
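The benefit of issuing IN requests back to back can be seen in a toy timing model. The sketch below is not taken from the specification: it assumes each device is described by a hypothetical (response latency, transfer time) pair in arbitrary time units, that issuing a request costs nothing, and that responses share one upstream link one at a time.

```python
def serialized_time(devices):
    """Host waits for each response before issuing the next IN request."""
    return sum(latency + transfer for latency, transfer in devices)

def pipelined_time(devices):
    """Host issues every IN request up front; responses queue on the shared link."""
    link_free_at = 0.0
    for latency, transfer in sorted(devices):
        start = max(link_free_at, latency)  # wait for both the data and the link
        link_free_at = start + transfer
    return link_free_at

# (response latency, transfer time) per device, in arbitrary units.
devices = [(2.0, 1.0), (2.0, 1.0), (2.0, 2.0)]
print(serialized_time(devices))
print(pipelined_time(devices))
```

In this model the response latencies overlap instead of adding up, so the link stays busy once the first packet arrives.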

Hub-Specific Changes

Hubs are currently one of the applications that are primarily driving USB 3.1 adoption. In the era of transformation from Type-A/B to Type-C connectors, a USB 3.1 hub can be more than just a bulk adapter for a host of legacy devices - it can also help maximize the performance of a couple of USB 3.1 Gen 1 devices. Introduction of a USB 3.1 10Gbps chipset architecture is imminent and, therefore, the USB 3.1 hubs implemented within display monitors or as standalone devices will help out in saturating the host bandwidth.

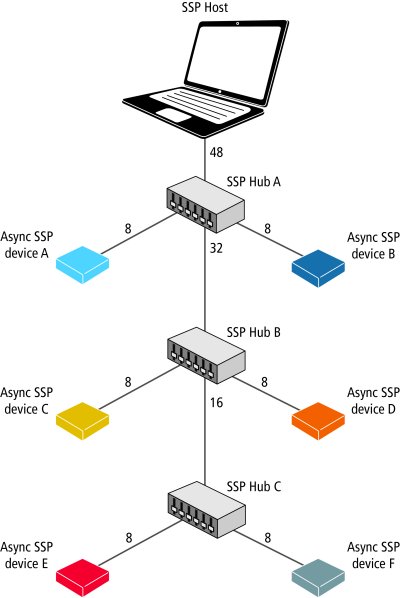

USB-IF is betting that the importance of USB hubs will increase in the future, so it does not want to discourage their use by distributing weight unfairly depending on how far upstream the device is plugged in. Therefore, a per-port round-robin algorithm, as shown in Figure 2, was introduced to distribute the weight evenly between all plugged-in devices, with the weight differentiated by the speed that each device supports. One can expect that in the future, when USB 3.1 Gen 3 20Gbps is introduced, proportionally higher weight will be given to these devices (probably 16, as Gen 2 is 8 and Gen 1 is 4 'points').

Figure 2: In the per-port round-robin algorithm depicted here, each packet gets a weight. Ports take turns forwarding, and ports with heavier packets get proportionally more turns. The initial weight of the packet depends on the speed of the link. The weight of the forwarded packet from the hub is the sum of the weights of all active downstream devices.
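The weighting idea can be sketched with a generic weighted round-robin scheduler. This is an assumption-laden illustration, not the arbiter defined by the specification: it uses the 4 and 8 'points' quoted above, models a hub port as a list of its active downstream devices, and picks turns with a common "smooth" weighted round-robin technique. All names are hypothetical.

```python
SPEED_WEIGHT = {"gen1": 4, "gen2": 8}  # 'points' quoted in the article

def port_weight(port):
    """A device port weighs its link speed; a hub port sums its active devices."""
    if isinstance(port, str):
        return SPEED_WEIGHT[port]
    return sum(port_weight(child) for child in port)

def schedule(ports, turns):
    """Smooth weighted round-robin: each turn goes to the port with the most credit."""
    weights = {name: port_weight(port) for name, port in ports.items()}
    total = sum(weights.values())
    credit = dict.fromkeys(weights, 0)
    order = []
    for _ in range(turns):
        for name in credit:
            credit[name] += weights[name]   # every port earns its weight each turn
        winner = max(credit, key=credit.get)
        credit[winner] -= total             # the winner pays for the turn it used
        order.append(winner)
    return order

# Port C is a hub with two Gen 1 devices and one Gen 2 device behind it.
ports = {"A": "gen2", "B": "gen1", "C": ["gen1", "gen1", "gen2"]}
order = schedule(ports, 7)
print(order)
```

With weights of 8, 4, and 16, the hub port receives four of every seven turns, so devices behind a hub are not penalized for sitting one tier further from the host.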

Isochronous Performance

On its own, the ability of USB to support multiple INs would have a negative impact on isochronous transfer performance, as hubs or hosts would not have a mechanism to prioritize isochronous traffic over asynchronous traffic. This is where mandatory traffic type information in the packet header and additional link layer credit type information come in handy. USB-IF introduced two traffic classes (Type 1 and Type 2) to separate the bulk and control transfer types from interrupt and isochronous, and give priority to the latter, so that all USB devices - hosts, hubs, and peripherals - will handle these first, securing the performance requirements.
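A minimal sketch of this prioritization, assuming a strict-priority queue in which interrupt and isochronous packets are always served before bulk and control packets, with first-in, first-out order inside each class. The class and constant names are hypothetical, and the link layer credit mechanism itself is not modeled.

```python
import heapq
from itertools import count

PERIODIC = 0      # interrupt and isochronous transfers, served first
ASYNCHRONOUS = 1  # bulk and control transfers

TRANSFER_CLASS = {
    "isochronous": PERIODIC,
    "interrupt": PERIODIC,
    "bulk": ASYNCHRONOUS,
    "control": ASYNCHRONOUS,
}

class PacketQueue:
    """Strict-priority queue keyed on traffic class, FIFO within a class."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker that preserves arrival order

    def push(self, transfer_type, payload):
        entry = (TRANSFER_CLASS[transfer_type], next(self._arrival),
                 transfer_type, payload)
        heapq.heappush(self._heap, entry)

    def pop(self):
        _, _, transfer_type, payload = heapq.heappop(self._heap)
        return transfer_type, payload

queue = PacketQueue()
queue.push("bulk", "b1")
queue.push("isochronous", "i1")
queue.push("control", "c1")
queue.push("interrupt", "n1")
served = [queue.pop() for _ in range(4)]
print(served)
```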

While USB 3.1 Gen 2 brings the advantage of a 10Gbps maximum data rate, the change does call for some adjustments in your design architecture to ensure that the bus is saturated. To address the changes introduced to all layers and provide functionality enhancements to mitigate the design challenges we’ve discussed, consider implementing pre-verified IP that supports USB 3.1 Gen 2. Indeed, USB is exciting once again!

Jacek Duda is a product marketing manager at Cadence, where he leads marketing efforts for USB IP development, with special focus on controller IP. He has been in the industry for more than 8 years now. He has a master’s degree in IT marketing from the University of Economics in Katowice.