By Prasanna Venkatesh Balasubramaniyan, HCL Tech

Autonomous Vehicles (AVs) need more intelligence when they are on the move. This intelligence is not just an algorithm driven by multiple sensor inputs; it needs to be highly situationally aware and to take the current vehicle dynamics into account. This requires a lot of complex situational and scenario-based computation, as well as communication with multiple Electronic Control Units (ECUs) within the vehicle. The real challenge is that the computation needs to happen in real time so that the vehicle can react accordingly and avoid unreasonable risk.

Most external scenarios are typically sensed through 360° using multiple cameras, LiDAR, radar, etc., and various Deep Learning or Deep Neural Network (DNN) algorithms perform the perception. Many Advanced Driver Assistance Systems (ADAS) provide alerts and assistance for a better driving experience. In L4+ autonomous vehicles, the DNN algorithm might also assist Adaptive Cruise Control (ACC) functions and help the vehicle maneuver smoothly, or guide it to a safe zone in a difficult situation.

Today, even though ADAS systems are designed to comply with the ISO 26262 standard to cover electrical and electronic (E/E) safety, and their software components are thoroughly verified and validated, there is still a chance of system failure due to “unknown, unsafe” scenarios. Autonomous vehicles can be exposed to unknown, unsafe situations in the real-world environment even when the ADAS hardware and software are operating correctly. Globally, the automotive industry has witnessed accidents and other incidents caused by the misbehavior of autonomous driving technology.

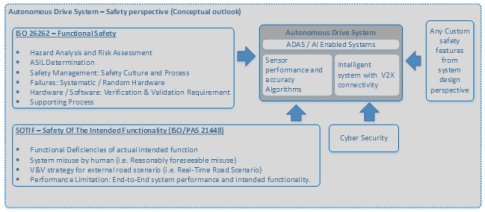

Figure 1: Autonomous Drive System – safety perspective (a conceptual outlook)

A few examples where an AI-enabled system could fail because the AI algorithm does not correctly interpret the real-time scenario:

- Inadequacy of the AI-enabled system for perception and safe driving operation

  - This could be due to an inadequate algorithm or an inadequate training dataset for the different operating conditions, road scenarios and driving regions.

- Human mishandling or negligence of the ADAS function or AI system

  - Driver negligence and inattention to the alerts and warnings provided (visual or audible)

  - Driver misuse of the configuration settings (settings for the ADAS system or automated function), causing the AI system to malfunction

- Dynamic changes in the surroundings of the road scene

  - For example, an object detected as static suddenly becomes a dynamic object

  - Unusual objects or holographic image movement, etc.

- External sensor instability, loss of calibration or external sensor failure (possibly intermittent)

  - Image sensor calibration or refresh problems

  - Intermittent image sensor failure

  - Sensor performance limitations

A SOTIF mindset to overcome engineering deficiencies:

In the latest ECU systems, as code density and the use of intelligent algorithms increase, the probability of system failure may also increase due to software failures rather than electrical and electronic (E/E) errors, because these systems are heavily packed with AI or DNN algorithms. Sophisticated AI-enabled systems may also be fed with driver biometric information alongside multiple L3+ functions. In these kinds of systems, the “scenario to system reaction time” is crucial.
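
To put the “scenario to system reaction time” into perspective, the short Python sketch below computes the distance a vehicle covers before the system has even begun to react (distance = speed × reaction time). The function name and the example speeds and latencies are illustrative assumptions, not values taken from any standard.

```python
# Minimal sketch: distance travelled while the system is still reacting.
# The speed and latency values used below are illustrative assumptions only.

def distance_during_reaction(speed_kmh: float, reaction_time_ms: float) -> float:
    """Return the distance (in metres) covered during the reaction time."""
    speed_mps = speed_kmh / 3.6              # convert km/h to m/s
    reaction_time_s = reaction_time_ms / 1000.0
    return speed_mps * reaction_time_s


if __name__ == "__main__":
    for latency_ms in (50, 100, 200):
        d = distance_during_reaction(100.0, latency_ms)
        print(f"100 km/h, {latency_ms} ms reaction -> {d:.2f} m travelled")
```

At 100 km/h, a 200 ms reaction time alone corresponds to roughly 5.6 m travelled before any braking or steering begins.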

SOTIF (Safety Of The Intended Functionality), ISO/PAS 21448, helps avoid unreasonable risk and hazards resulting from functional deficiencies of the intended function or from system misuse by humans. SOTIF can be applied to ADAS systems or any other emergency systems that could lead to a safety hazard which may not be caused by a system failure.

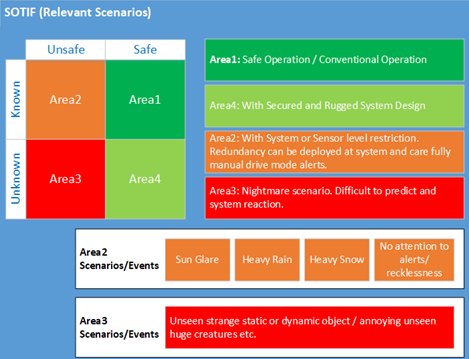

Figure 2: SOTIF (Relevant Scenarios) - Outlook

So, when designing an AI-enabled system for a specific functionality, the design should ensure the intended functionality, guaranteeing that the system is not affected by performance limitations or human misuse. Developing intelligent systems with SOTIF considerations therefore makes it possible to design systems that are “situationally aware”.

When designing intelligent systems, designers should ensure that the system (which could comprise semiconductor devices + AI algorithm + application software + sensor interface + HMI controls, etc.) has no functional or performance limitations with respect to the purpose it is designed for. To rule out performance limitations, the device, the algorithm, the training dataset, the hardware platform and the KPIs (Key Performance Indicators) must be viable and sufficient to deliver the “intended functionality without any functional deficiency”, so that the required performance and system reaction time are guaranteed in all possible scenarios.

For example, consider the following systems:

- Example 1: A system that performs multi-sensor input signal processing followed by deep neural network inference, and updates the result on the user HMI panel or Digital Instrument Cluster panel with visual and audio alerts.

- Example 2: A system that takes input from multiple camera sensors (with higher frame rates and resolutions) and performs complex image processing followed by classification using a deep neural network algorithm. Another algorithm (or functional block) then uses the classification result plus vehicle parameters, performs multiple iterations of estimation, and generates control parameters for other ECUs and for the HMI panel.

In these kinds of intelligent systems, the designer should ensure that the algorithm, the silicon device, the interfaces (on-chip/off-chip) or the deployed platform do not create any unintended system behavior because of performance limitations.

SOTIF can be applied and analyzed for various hazardous event models that could cause a failure affecting the “intended functionality”, or a failure due to “human misuse / mishandling” of user settings or parameters. This methodology enables designers to identify all possible situations that could fall under “unknown, unsafe” scenarios, and helps determine a proper system time budget to avoid performance limitation issues.
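
A time budget of this kind can be sketched as a check that the worst-case latencies of the pipeline stages in Example 2 fit within an end-to-end reaction-time budget. The stage names, latency figures and the 100 ms budget below are hypothetical placeholders; simply summing worst-case stage times is a conservative simplification that ignores pipelining and parallel execution.

```python
# Minimal sketch of an end-to-end time-budget check for a pipeline like
# Example 2. Stage names, latencies and the budget are hypothetical.
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    worst_case_ms: float  # measured or estimated worst-case execution time


def check_budget(stages, budget_ms):
    """Return (total worst-case latency, remaining margin, within budget)."""
    total = sum(s.worst_case_ms for s in stages)
    margin = budget_ms - total
    return total, margin, margin >= 0


pipeline = [
    Stage("camera capture + ISP", 33.0),
    Stage("image pre-processing", 8.0),
    Stage("DNN classification", 25.0),
    Stage("estimation iterations", 12.0),
    Stage("ECU/HMI communication", 10.0),
]

total, margin, ok = check_budget(pipeline, budget_ms=100.0)
print(f"total={total} ms, margin={margin} ms, within budget={ok}")
```

Keeping the per-stage figures explicit makes it easy to see which stage consumes the margin when a performance limitation is found.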

With SOTIF-based guidelines and environmental scenarios taken into account, designers can identify and evaluate scenarios and triggering events, which enables them to design an ‘intelligent, situationally aware’ system without over-engineering it.

For the SOTIF verification and validation approach, the combination of “intended functionality and reasonably foreseeable misuse (i.e. human misuse)” can be taken into account when identifying hazardous events. Moreover, if the intelligent system involves functions or algorithms with unpredictable behavior, the complexity of validation and automation increases. At the same time, new AI-powered “intelligent sensing” imaging technology is gaining momentum. When these types of sensors are deployed in an ADAS system, “sensor performance and accuracy” become even more vital with respect to SOTIF, because the end-to-end system performance and intended functionality need to be guaranteed in integrated validation testing.

So a combination of “Real-Time Road Scenario vs. Misuse vs. System Intended Function” helps in deriving and testing the system behavior by generating the appropriate hazardous events.
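
As a minimal sketch of this combination, the Python snippet below enumerates every pairing of road scenario, misuse case and intended function as a candidate hazardous event using `itertools.product`. The category values are hypothetical examples; in practice they would come from the hazard analysis for the specific system.

```python
from itertools import product

# Hypothetical, illustrative categories; a real analysis would derive these
# from the system definition and its hazard analysis.
road_scenarios = ["highway", "city drive", "school zone"]
misuses = ["none", "alert ignored", "wrong ADAS setting"]
intended_functions = ["ACC", "lane keeping"]

# Cartesian product: every scenario x misuse x function combination.
hazard_events = [
    {"scenario": s, "misuse": m, "function": f}
    for s, m, f in product(road_scenarios, misuses, intended_functions)
]

print(len(hazard_events))  # 3 * 3 * 2 = 18 combinations
for event in hazard_events[:3]:
    print(event)
```

The number of combinations grows multiplicatively with each category, which is why the categories should be kept to those that are genuinely relevant to the function under test.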

Typically, for the Area 1 and Area 4 scenarios (refer to Figure 2), the normal intended functionality can be deployed and verified. For Area 2 (refer to Figure 2), the independent system behavior (with intended functionality), other random triggering events with the associated road scenarios, possible misuse of system functions and driver negligence of system alerts can be simulated and validated at the system level. Also, depending on the functionality of the intelligent system, the deployed algorithm and the end-to-end response time can be verified to identify performance limitations and potential functional improvements.
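
The four areas can be captured as a small classification driven by two questions: is the scenario known, and is the system behavior in it safe? This sketch assumes the usual ISO 21448 labelling (Area 1 known/safe, Area 2 known/unsafe, Area 3 unknown/unsafe, Area 4 unknown/safe), which matches the Area 3 description in the text; the activity strings only paraphrase the validation approach described here and are not taken from the standard.

```python
from enum import Enum


class SotifArea(Enum):
    # Assumed ISO 21448-style labelling of the four scenario areas.
    AREA_1 = "known, safe"
    AREA_2 = "known, unsafe"
    AREA_3 = "unknown, unsafe"
    AREA_4 = "unknown, safe"


def classify(known: bool, safe: bool) -> SotifArea:
    """Map the two scenario properties onto a SOTIF area."""
    if known:
        return SotifArea.AREA_1 if safe else SotifArea.AREA_2
    return SotifArea.AREA_4 if safe else SotifArea.AREA_3


# Validation activity per area, paraphrasing the approach in this article.
ACTIVITY = {
    SotifArea.AREA_1: "verify the normal intended functionality",
    SotifArea.AREA_2: "simulate triggers, misuse and alert negligence at system level",
    SotifArea.AREA_3: "generate synthetic trigger events, black-box HIL testing",
    SotifArea.AREA_4: "verify the normal intended functionality",
}

for known in (True, False):
    for safe in (True, False):
        area = classify(known, safe)
        print(f"{area.name}: {area.value} -> {ACTIVITY[area]}")
```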

For Area 3, which covers “unknown, unsafe” scenarios (refer to Figure 2), the scenarios or triggering events can be generated synthetically for the road environment (for example, Highway, City Drive, Traffic situation, School Zone, Hospital Zone, Men-at-Work, Service lane crossing, etc.) and evaluated qualitatively and quantitatively to assess the system behavior as black-box testing using a Hardware-in-the-Loop (HIL) setup. This can help in residual scenario testing.
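
Synthetic generation of triggering events for HIL runs can be sketched as seeded random sampling over the road environments listed above. The trigger-event names, speed range and timing range are hypothetical; the fixed seed is a design choice so that any failing case can be regenerated and replayed on the HIL bench. Uniform random sampling is only one simple strategy for exploring Area 3.

```python
import random

# Road environments taken from the text above.
ROAD_ENVIRONMENTS = ["Highway", "City Drive", "Traffic situation", "School Zone",
                     "Hospital Zone", "Men-at-Work", "Service lane crossing"]
# Hypothetical trigger events, for illustration only.
TRIGGER_EVENTS = ["static object starts moving", "sudden pedestrian crossing",
                  "camera frame drop", "low sun glare", "unusual object on road"]


def generate_test_cases(n, seed=0):
    """Return n randomly sampled scenario/trigger test cases."""
    rng = random.Random(seed)  # private, seeded generator: same seed -> same cases
    cases = []
    for i in range(n):
        cases.append({
            "id": i,
            "environment": rng.choice(ROAD_ENVIRONMENTS),
            "trigger": rng.choice(TRIGGER_EVENTS),
            "ego_speed_kmh": rng.randint(10, 130),            # hypothetical range
            "trigger_time_s": round(rng.uniform(1.0, 20.0), 1),
        })
    return cases


for case in generate_test_cases(3, seed=42):
    print(case)
```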

Summary:

When designing an intelligent system for ADAS or autonomous driving, making the vehicle understand the real-time road environment and become “aware and intelligent” is a real challenge. SOTIF (ISO/PAS 21448) will have a substantial focus and impact on autonomous vehicles. Intelligent systems with artificial intelligence, or systems with more perception features, will see a real benefit in safety validation by adopting SOTIF considerations. Also, validating a system for reasonably foreseeable misuse across road scenarios will help build robust systems that use real-time data from V2X. So ISO/PAS 21448-based verification and validation, combined with the latest Hardware-in-the-Loop (HIL) testing, will enable the design and validation of more sophisticated and intelligent systems.