By Ang Boon Chong, Loh Jih Keat, Phang Eng Hong, Quek Li Chuang, Lee Chia Cheng (Intel Corp)

ABSTRACT

Performance sweeping is an important task in technology and tool-flow-methodology (TFM) evaluation. In the past, most performance sweeps were done selectively and were not reused. Performance sweeping is also often time consuming and requires manual effort. This paper shares a performance auto sweep flow for technology and TFM evaluation.

1.0 Introduction

Performance sweeping is a common task in technology and tool-flow-methodology (TFM) evaluation. Historically, the methods used to derive the maximum performance can be summarized as in Table 1.

| Method | Comment |
| --- | --- |
| Manual frequency chart | The user pre-defines a fixed interval. Peak performance is derived from the chart. |
| DSO.AI | Sweeps through permutons. Needs an additional tool license. The tool’s benefits are underutilized when it is used for technology performance sweeps only. |
| Timing Fault Detector | Based on dynamic verification. |

Table 1: Max Performance Derivation Prior Art [1]-[2]

This paper shares a Unix-based performance auto sweep methodology. The auto regression IT infrastructure is beyond the scope of this paper.

2.0 Performance Auto Sweep Overview

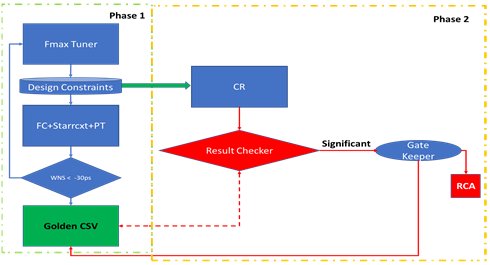

The performance auto sweep overview is shown in Figure 1. The implementation is divided into two phases. The first phase is the Fmax tuner, which auto regresses until the maximum frequency is reached. This result serves as the baseline for a particular technology node, with process, voltage and temperature (PVT) taken into account. The result obtained from phase 1 is released to phase 2 through the golden constraint, as shown in Figure 1. The type of central regression (CR) depends on the in-house solution used. Central regression checks each tool-flow-methodology release, or each technology evaluation at a different milestone, against the golden baseline.

Figure 1: Performance Auto Sweep Overview

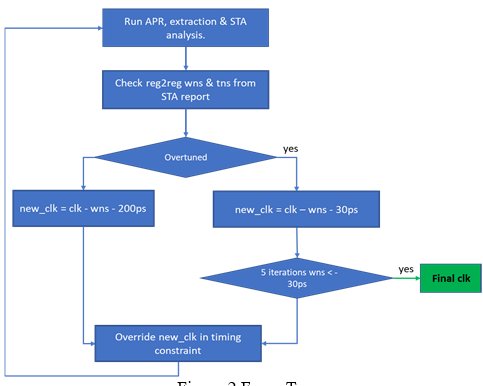

The Fmax tuner flow chart is shown in Figure 2.

Figure 2: Fmax Tuner

At the beginning of the tuning phase, the worst slack is likely to be positive. Hence, the Fmax tuner uses a larger step size early in the maximum frequency tuning to reduce the number of iterations needed to reach the maximum frequency. Once performance has saturated for 5 consecutive iterations, the tuner stops.
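The stepping and stop rule described above can be sketched in Python. This is a minimal illustration and not the flow of Figure 2 itself: the `run_flow` hook, the step sizes, the picosecond units, and the `toy_flow` stand-in (a tool that closes timing with about 5% margin until it hits an assumed 800 ps floor) are all hypothetical. Saturation is interpreted here as the achieved period (target minus worst slack) failing to improve.

```python
from typing import Callable, List, Optional, Tuple


def tune_fmax(
    run_flow: Callable[[int], int],
    start_period_ps: int,
    coarse_step_ps: int,
    fine_step_ps: int,
    patience: int = 5,
) -> Tuple[Optional[int], List[Tuple[int, int]]]:
    """Tighten the clock period until the achieved period stops improving.

    run_flow(period_ps) is a hypothetical hook that runs the implementation
    flow at the given target period and returns the worst slack in ps.
    """
    target = start_period_ps
    best_achieved = None  # smallest achieved period seen so far
    stalled = 0           # consecutive iterations without improvement
    history = []          # (target period, worst slack) per iteration

    while stalled < patience and target > 0:
        slack = run_flow(target)
        achieved = target - slack  # period the design actually meets
        history.append((target, slack))

        if best_achieved is None or achieved < best_achieved:
            best_achieved = achieved
            stalled = 0
        else:
            stalled += 1

        # Positive slack means the limit is still far away: take the larger step.
        step = coarse_step_ps if slack > 0 else fine_step_ps
        target -= step

    return best_achieved, history


def toy_flow(period_ps: int) -> int:
    """Stand-in for a real flow (assumption): about 5% margin, 800 ps floor."""
    delay = max(800, period_ps * 95 // 100)
    return period_ps - delay


best_ps, runs = tune_fmax(toy_flow, start_period_ps=2000,
                          coarse_step_ps=200, fine_step_ps=20)
print(f"best achieved period: {best_ps} ps after {len(runs)} runs")
```

With the toy model, seven coarse steps bring the target from 2000 ps down to the 800 ps floor, and five further fine steps show no improvement, so the loop stops after 12 runs. In a real deployment `run_flow` would launch the synthesis or place-and-route job and parse the timing report.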

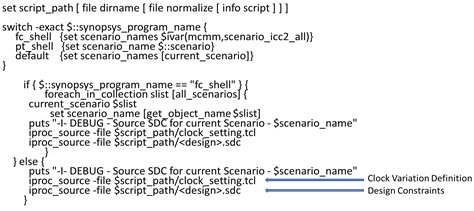

To ease the auto sweep scripting burden, the design constraint is standardized as in Figure 3.

Figure 3: Constraint Partitioning

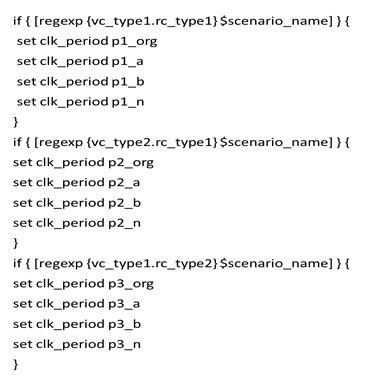

The clock constraint file is itself partitioned as shown in Figure 4.

Figure 4: Clock Partitioning

As shown in Figure 4, the corner name of each scenario is used to define the performance target for that corner. The corner definition is the concatenation of the voltage and the RC corner. The values p1_org, p2_org and p3_org are the original constraints for each corner. They can initially share the same value, as the design may define only one performance target at the start. The performance auto sweep flow updates the performance target for each corner after each loop. Hence, the user can also trace the history of clock period changes from the clock constraint file.
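The per-corner bookkeeping described above can be modeled with a small Python sketch. It does not reproduce the constraint file format of Figure 4: the underscore separator, the voltage and RC corner labels, the `CornerTarget` class, and the period values are assumptions made for illustration only.

```python
from dataclasses import dataclass, field
from typing import Dict, List


def corner_name(voltage: str, rc_corner: str) -> str:
    """Concatenate voltage and RC corner (the '_' separator is an assumption)."""
    return f"{voltage}_{rc_corner}"


@dataclass
class CornerTarget:
    """Original clock period of one corner plus every update made by the sweep."""
    corner: str
    period_org: float                      # plays the role of p1_org, p2_org, ...
    history: List[float] = field(default_factory=list)

    @property
    def period(self) -> float:
        """Current target: the latest update, or the original if none yet."""
        return self.history[-1] if self.history else self.period_org

    def update(self, new_period: float) -> None:
        """Record the target chosen after one sweep loop."""
        self.history.append(new_period)


# Hypothetical corners; all start from the same original target.
corners = [corner_name(v, rc) for v, rc in
           [("vhigh", "cworst"), ("vnom", "ctypical"), ("vlow", "cbest")]]
targets: Dict[str, CornerTarget] = {c: CornerTarget(c, 2.0) for c in corners}

# Two sweep loops update only the high-voltage corner here.
targets["vhigh_cworst"].update(1.8)
targets["vhigh_cworst"].update(1.6)

for t in targets.values():
    print(t.corner, t.period_org, t.history, t.period)
```

Keeping the original value next to the list of updates mirrors the idea that the clock period history stays traceable in one place.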

In the phase 2 implementation, the result checker is a feature of the central regression IT infrastructure. The deployment team can define the tolerance range that determines pass or fail for each performance metric. Note that an out-of-range result includes significant improvement, not only degradation, because a tool bug may trigger design over-optimization through logic elimination and produce an unexpected performance gain. One sign of such an event is a failing logic equivalence check. The root-cause analysis (RCA) is manual for now. When a significant amount of RCA data is available, it will serve as input for future internal RCA-AI [3] work.
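A two-sided tolerance check of this kind can be sketched as follows. The actual result checker belongs to the central regression infrastructure and is not described in this paper, so the function name, the relative-tolerance definition, and the sample numbers are assumptions.

```python
def check_metric(golden: float, measured: float, tolerance: float) -> str:
    """Compare a measured metric against its golden value.

    The check is two-sided: a result far better than golden is flagged just
    like a degradation, since it may point to over-optimization by the tool.
    """
    if golden == 0:
        raise ValueError("golden value must be non-zero for a relative check")
    deviation = (measured - golden) / abs(golden)
    if deviation > tolerance:
        return "out_of_range_high"
    if deviation < -tolerance:
        return "out_of_range_low"
    return "pass"


# Hypothetical achieved clock periods in ps, checked with a 5% tolerance.
print(check_metric(1000.0, 1030.0, 0.05))  # within range
print(check_metric(1000.0, 1100.0, 0.05))  # slower than golden: degradation
print(check_metric(1000.0, 900.0, 0.05))   # much faster than golden: suspicious
```

Whether "high" or "low" means improvement depends on the metric (a lower period is better, a lower slack is worse), so the sketch only reports the direction and leaves the interpretation, and any follow-up logic equivalence check, to the caller.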

3.0 Results

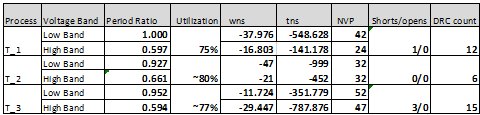

A sample of tabulated performance data for competing technologies is shown in Table 2 and Table 3. The technology names, voltages and clock periods are relabeled for confidentiality reasons.

Table 2: Tabulated Golden Result For Timing And Physical DRC

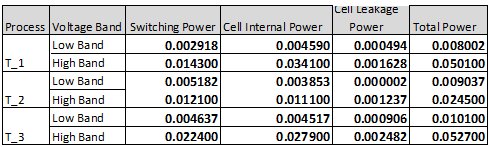

Table 3: Tabulated Golden Result For Power

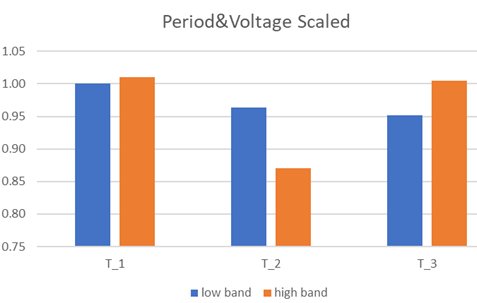

In Table 2, the period for each technology is scaled to the same base reference of 1. Note also that the high band and low band voltage values differ from one technology to another. If the data from Table 2 is further normalized for the voltage scaling effect, the result is as shown in Figure 5. Figure 5 shows that the high band performance of the T_2 technology is inferior.
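Scaling periods to a common base reference of 1 amounts to dividing each value by one chosen reference period. The sketch below shows only that step, with made-up technology names and periods. The voltage normalization behind Figure 5 is not reproduced, because its formula is not given here.

```python
from typing import Dict


def normalize_periods(periods_ps: Dict[str, float],
                      reference: str) -> Dict[str, float]:
    """Divide every period by the reference entry so the reference becomes 1."""
    base = periods_ps[reference]
    return {name: period / base for name, period in periods_ps.items()}


# Hypothetical raw clock periods in ps; these are not the data of Table 2.
raw = {"tech_a": 1000.0, "tech_b": 1200.0, "tech_c": 800.0}
scaled = normalize_periods(raw, reference="tech_a")
print(scaled)
```

A scaled value above 1 means a longer period, and therefore a lower maximum frequency, than the reference.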

Figure 5: Scaled High Band And Low Band Performance

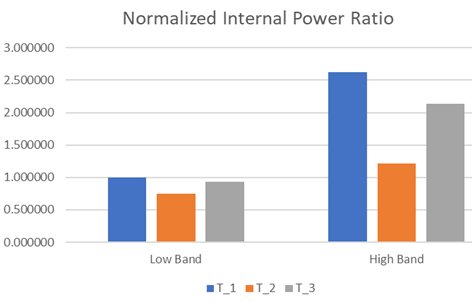

In Table 3, the low band data for T_2 is not at the same temperature as the rest. Hence, the leakage data for T_2 cannot be compared directly with the T_1 and T_3 low band data. If the internal power is scaled to account for performance and voltage, the result is as shown in Figure 6. Figure 6 shows that the T_2 technology has better power efficiency at both high band and low band voltages. Hence, T_2 offers better power-performance efficiency for battery applications.

Figure 6: Scaled High Band And Low Band Internal Power

4.0 Summary

Enabling performance auto sweep reduces the manual effort needed to search for the optimal maximum performance curve for each technology and tool-flow-methodology release. This allows designers to focus on high-value tasks and have meaningful discussions with the various stakeholders.

5.0 Acknowledgements

Thanks to Koh Jid Ian, Loe Shih Yhui, Tang Linm Ern, Poh Yen Chiao, and Nordin Nor Farahanim for their contributions to the performance auto sweep enablement.

6.0 References

[1] Design Space Optimizer AI https://www.synopsys.com/implementation-and-signoff/ml-ai-design/dso-ai.html

[2] Edward Stott, Joshua M Levine, Peter Y.K. Cheung, Nachiket Kapre, “Timing Fault Detection in FPGA-Based Circuits”, IEEE 22nd Annual International Symposium on Field Programmable Custom Computing Machines, May 2014.

[3] Julen Kahles, Juha Torronen, Timo Huuhtanen, Alex Jung, “Automating Root Cause Analysis via Machine Learning in Agile Software Testing Environments”, IEEE Conference on Software Testing, Validation and Verification (ICST), 22-27 April 2019.