By PLDA

Computer systems as we know them have been built on the paradigm that the CPU-memory pair is fast while network and storage are slow. Over the years, these components developed their own languages and interfaces, which require layers of software to translate memory commands into network and storage commands and vice versa.

Until now, the speed of the CPU-memory pair relative to network and storage I/O was such that these software layers had minimal impact on system performance.

However, with Moore’s law in full effect, network and storage technologies are quickly catching up with CPU-memory speeds, and the burden of generations of software layers has become significant.

In this article we look at the Gen-Z fabric as a solution to eliminate existing system bottlenecks and significantly improve system efficiency and performance by unifying communication paths and simplifying software using the CPU-memory load/store language throughout.

Towards a new computing architecture

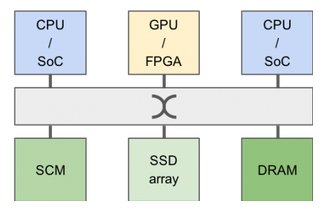

As illustrated in Figure 1, computing architectures are quickly evolving towards heterogeneous systems that include a variety of compute elements (CPU/SoC, GPU, FPGA) and different types of memory/storage elements (DRAM, Storage Class Memory), interconnected locally or remotely.

Such an architecture should provide flexibility and scalability by allowing resources to be added, removed, or replaced as newer versions or newer technologies become available.

Figure 1 - Towards a new computing architecture

The CPU-centric approach

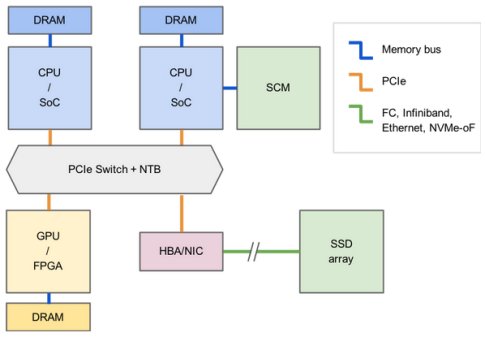

With today’s CPU-memory centric approach, the system in Figure 1 would be implemented using a variety of silicon components, interfaces, and software layers as shown in Figure 2.

Figure 2 - CPU-memory centric system architecture

In this particular implementation of the computing system, PCI Express is used as the main interconnect between CPU-attached memories, GPU/FPGA-attached memories, and the high-performance, low-latency Storage Class Memory (SCM). The SSD array is connected through a Host Bus Adapter (HBA) or NIC, using Fibre Channel, InfiniBand, NVMe-oF, or Ethernet as the transport interface.

Data in one CPU’s DRAM has to traverse four interface domains before reaching the SSD array, incurring software overhead and buffer copy operations along the way.

Additionally, scalability would be a concern: upgrading to next generation SCM is likely to require an upgrade/replacement of the associated CPU/SoC; likewise, expanding the SSD array may require a fabric switch downstream of the HBA/NIC.

The memory-semantic approach

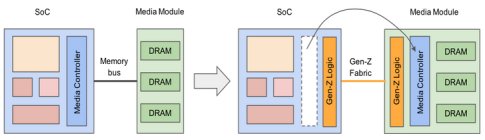

Gen-Z is a memory-semantic fabric that extends the CPU-memory byte-addressable load/store model to the entire system. The load/store model has proven to be the fastest and most effective way for a CPU to communicate with a memory subsystem. To enable this model, Gen-Z decouples the compute from the media, placing the media-specific functionality with the media where it rightfully belongs. Figure 3 illustrates this principle.

Figure 3 - From CPU-memory interface to media-agnostic fabric
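The difference between byte-addressable load/store access and block-oriented I/O can be illustrated with a small model. The following is a minimal sketch, not Gen-Z code: the `BlockDevice` class, the 4096-byte block size, and both helper functions are hypothetical, and an anonymous `mmap` region merely stands in for a byte-addressable memory component.

```python
import mmap

BLOCK_SIZE = 4096  # assumed block size for the toy block device


class BlockDevice:
    """Toy block device: data can only be moved in whole blocks."""

    def __init__(self, num_blocks):
        self._data = bytearray(num_blocks * BLOCK_SIZE)
        self.bytes_transferred = 0  # running total of bytes moved

    def read_block(self, index):
        self.bytes_transferred += BLOCK_SIZE
        start = index * BLOCK_SIZE
        return bytearray(self._data[start:start + BLOCK_SIZE])

    def write_block(self, index, block):
        if len(block) != BLOCK_SIZE:
            raise ValueError("block I/O requires a full block")
        self.bytes_transferred += BLOCK_SIZE
        start = index * BLOCK_SIZE
        self._data[start:start + BLOCK_SIZE] = block


def update_byte_block_io(dev, offset, value):
    """Change one byte through block I/O: read, modify, write back."""
    index, within = divmod(offset, BLOCK_SIZE)
    block = dev.read_block(index)
    block[within] = value
    dev.write_block(index, block)


def update_byte_load_store(mem, offset, value):
    """Change one byte through a byte-addressable region: one store."""
    mem[offset] = value


dev = BlockDevice(num_blocks=4)
update_byte_block_io(dev, 5000, 0x2A)    # moves two full blocks

mem = mmap.mmap(-1, 4 * BLOCK_SIZE)      # anonymous byte-addressable region
update_byte_load_store(mem, 5000, 0x2A)  # touches a single byte
```

In this model the block path moves 8192 bytes to change one byte, while the load/store path touches only the byte it needs. Real systems add caching and driver layers on both paths, so the sketch shows the semantic difference rather than measured performance.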

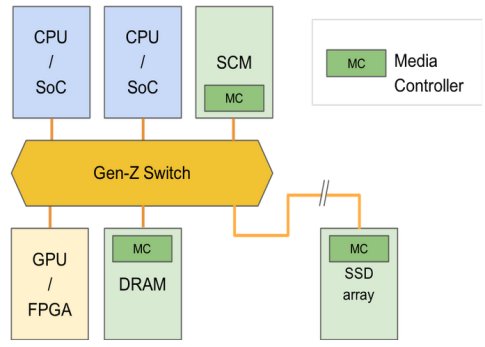

This important change allows every compute entity in the system to be media-agnostic and disaggregated. With a Gen-Z memory-semantic fabric, the system in Figure 1 could be implemented using a switched topology, as shown in Figure 4.

Figure 4 - System architecture with Gen-Z

Through this approach, all devices are peers to one another and speak the same load/store language through simplified, high-performance, low-latency communication paths, without incurring the translation penalties and software overhead of current bus architectures.
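As a rough illustration of the peer model, the sketch below routes load and store requests through a toy switch to whichever component owns the target address. It is a conceptual model only: `Responder`, `ToySwitch`, and the flat address map are hypothetical names and simplifications, and none of this reflects the actual Gen-Z packet format or addressing scheme.

```python
class Responder:
    """A memory-like component; media-specific details stay inside it."""

    def __init__(self, name, size):
        self.name = name
        self.size = size
        self._cells = bytearray(size)

    def load(self, offset, length):
        return bytes(self._cells[offset:offset + length])

    def store(self, offset, data):
        self._cells[offset:offset + len(data)] = data


class ToySwitch:
    """Maps a flat, fabric-wide address space onto attached responders."""

    def __init__(self):
        self._ranges = []    # list of (base, limit, responder)
        self._next_base = 0

    def attach(self, responder):
        """Assign the next free address range and return its base."""
        base = self._next_base
        self._ranges.append((base, base + responder.size, responder))
        self._next_base += responder.size
        return base

    def _route(self, address, length):
        for base, limit, responder in self._ranges:
            if base <= address and address + length <= limit:
                return responder, address - base
        raise ValueError("address range is not mapped to any responder")

    def load(self, address, length):
        responder, offset = self._route(address, length)
        return responder.load(offset, length)

    def store(self, address, data):
        responder, offset = self._route(address, len(data))
        responder.store(offset, data)


fabric = ToySwitch()
dram_base = fabric.attach(Responder("dram", 1024))
scm_base = fabric.attach(Responder("scm", 1024))

# The requester uses the same two verbs regardless of the media behind them.
fabric.store(dram_base, b"hello")
fabric.store(scm_base + 16, fabric.load(dram_base, 5))
```

The requester never needs to know whether an address is backed by DRAM or Storage Class Memory; in this sketch, that knowledge lives entirely in the responder.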

The Gen-Z protocol defines a wide array of memory-semantic operations (through OpCodes/OpClasses) that enable efficient data transfers to offload compute resources, optimize interconnect usage, and reduce software overhead. In the example shown, data from DRAM could be copied to the SSD array in one load and one store operation using the appropriate OpCode/OpClass.

In terms of scalability, the system can be tailored exactly to each workload and environment by upgrading, adding, or removing compute, memory, or storage elements independently, without affecting functionality.

About Gen-Z

The Gen-Z architecture is focused on delivering high efficiency, high bandwidth, and low latency.

High efficiency is achieved by capitalizing on the proven load/store model. The Gen-Z hardware interface layer is simplified, which in turn minimizes the need for software layers. Removing this complexity, overhead, and induced system latency can dramatically improve system performance.

High bandwidth is achieved in two ways. First, Gen-Z supports asymmetric communication paths, meaning more lanes can be dedicated to the read path than the write path, or vice versa. Second, Gen-Z supports multiple signaling rates: 16, 25, 32, 56, and 112 GT/s. Together, these capabilities allow Gen-Z to keep pace with the industry’s ever-growing need for speed while also allowing Gen-Z communication paths to be tuned to specific workload traffic patterns.
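The effect of combining asymmetric lanes with a given signaling rate can be worked out with simple arithmetic. The sketch below is illustrative only: it assumes one bit per transfer per lane and ignores encoding and protocol overhead, so the results are raw upper bounds. The 8-lane budget and the function names are hypothetical and are not taken from the Gen-Z specification.

```python
# Signaling rates listed in the article, in GT/s.
GEN_Z_RATES_GT_S = (16, 25, 32, 56, 112)


def raw_gbps(lanes, rate_gt_s):
    """Raw one-direction bandwidth in Gb/s (assumes 1 bit per transfer per lane)."""
    if rate_gt_s not in GEN_Z_RATES_GT_S:
        raise ValueError(f"unsupported signaling rate: {rate_gt_s} GT/s")
    if lanes < 0:
        raise ValueError("lane count must be non-negative")
    return lanes * rate_gt_s


def asymmetric_link(total_lanes, read_lanes, rate_gt_s):
    """Split a fixed lane budget between the read and write paths."""
    if not 0 <= read_lanes <= total_lanes:
        raise ValueError("read_lanes must be between 0 and total_lanes")
    write_lanes = total_lanes - read_lanes
    return {
        "read_gbps": raw_gbps(read_lanes, rate_gt_s),
        "write_gbps": raw_gbps(write_lanes, rate_gt_s),
    }


# Hypothetical 8-lane link at 25 GT/s.
symmetric = asymmetric_link(total_lanes=8, read_lanes=4, rate_gt_s=25)
read_heavy = asymmetric_link(total_lanes=8, read_lanes=6, rate_gt_s=25)
```

With these assumptions, the symmetric split gives 100 Gb/s in each direction, while the read-heavy split gives 150 Gb/s for reads and 50 Gb/s for writes from the same eight lanes.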

Low latency is achieved by means of reduced software stacks. Traditional server storage and network stacks are heavily layered, which adds significant latency to communications. Gen-Z, by contrast, operates with a lightweight software interface that performs memory reads and writes directly to the hardware.

Gen-Z for chip designers

Chip designers looking to develop Gen-Z products need several key ingredients, as detailed below:

- Gen-Z controller IP: SoCs, switches, storage media controllers, and other types of Gen-Z devices all require configurable, high-quality controller IP to enable connection to the Gen-Z fabric. At the time of this writing, two IP vendors, both members of the Gen-Z consortium, have announced current and future availability of Gen-Z controller IP.

- Gen-Z PHY IP: Initial Gen-Z implementations are set to focus on proven, deployed NRZ PHY signaling technology and speeds, leveraging the availability of PCIe PHY at 16 and 32 GT/s and IEEE 802.3 PHY at 25 GT/s. Later deployments are likely to leverage advanced PAM4 PHY signaling rates such as 56 and 112 GT/s.

- Gen-Z Verification IP: Comprehensive Verification IP (VIP) is essential to guaranteeing the quality of the Gen-Z IP before and after integration in an SoC. At the time of this writing, two vendors have announced availability of Verification IP for Gen-Z.

- FPGA prototyping boards: FPGA prototyping is a necessary step in ensuring functionality and interoperability at the system level. Current FPGA technology allows for prototyping Gen-Z at up to 56 GT/s (PAM4) and 32 GT/s (NRZ). Connectors are also being developed to enable multi-lane Gen-Z signaling at these rates over copper and optical connections. FPGA prototyping boards are available from multiple vendors, and Gen-Z-specific prototyping platforms based on FPGA technology are expected to become available soon.

The Gen-Z consortium includes members from every segment of the technology space. This breadth of membership is essential to building a viable product ecosystem in which all the necessary hardware and software components interoperate with one another.

Closing statement

Gen-Z offers a unique opportunity for the computer industry to redefine modern computing and overcome current challenges with the existing CPU-memory paradigm. As new companies continue to join the growing Gen-Z open ecosystem, the availability of building blocks, products and services will naturally increase and enable new designs and products to address new workloads and emerging challenges.

Gen-Z provides opportunities for innovative high-performance, low-latency solutions that will be open, efficient, simple, and cost-effective.
