
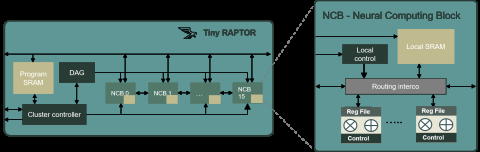

Deep Neural Network Programmable Accelerator

TinyRaptor helps reduce the inference time needed to run machine learning (ML) neural networks (NN). It is particularly well suited for edge computing applications on embedded platforms with both high-performance and low-power requirements.

Block Diagram of the Deep Neural Network Programmable Accelerator IP Core

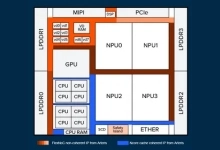

NPU IP

- NPU IP family for generative and classic AI with highest power efficiency, scalable and future proof

- General Purpose Neural Processing Unit (NPU)

- AI accelerator (NPU) IP - 1 to 20 TOPS

- AI accelerator (NPU) IP - 16 to 32 TOPS

- AI accelerator (NPU) IP - 32 to 128 TOPS

- ARC NPX Neural Processing Unit (NPU) IP supports the latest, most complex neural network models and addresses demands for real-time compute with ultra-low power consumption for AI applications